Data Deduplication (sometimes called disk deduplication) was introduced in Windows Server 2012 and improved in Windows Server 2012 R2. It is based on the idea that if you have multiple copies of the same file, you only need to write one copy to disk and can then provide pointers to that copy.

What is Data Deduplication in Windows Server?

Data Deduplication, often called Dedup for short, is a feature that can help reduce the impact of redundant data on storage costs. When enabled, Data Deduplication optimizes free space on a volume by examining its data and looking for duplicated portions.

What are the requirements for Data Deduplication?

At a minimum, Data Deduplication should have 300 MB of memory plus 50 MB for each TB of logical data. For instance, if you are optimizing a 10 TB volume, you need at least 800 MB of memory allocated for deduplication (300 MB + (50 MB * 10) = 300 MB + 500 MB = 800 MB).
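The sizing rule above can be written as a small helper. This is only a sketch of the arithmetic; the function and constant names are made up for illustration and are not part of any Windows tooling.

```python
BASE_MB = 300    # fixed minimum from the rule above
PER_TB_MB = 50   # additional memory per TB of logical data


def min_dedup_memory_mb(logical_data_tb):
    """Return the minimum memory (in MB) suggested for deduplication."""
    if logical_data_tb < 0:
        raise ValueError("logical_data_tb must be non-negative")
    return BASE_MB + PER_TB_MB * logical_data_tb


# The 10 TB volume from the example above
print(min_dedup_memory_mb(10))  # 800
```

Treat the result as a floor, not a recommendation for comfortable operation.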

How does file deduplication work?

Data deduplication works by comparing blocks of data or objects (files) in order to detect duplicates. Deduplication can take place at two levels: file level and sub-file level. In some systems, only complete files are compared, which is called Single Instance Storage (SIS).
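To make the difference between the two levels concrete, here is a rough sketch that counts how many bytes would need to be stored under each approach. It assumes fixed-size chunks and SHA-256 hashes purely for simplicity; the chunk size, function names, and sample data are hypothetical and do not describe how Windows Server chunks data.

```python
import hashlib

CHUNK_SIZE = 4  # hypothetical and tiny, so the sample data is easy to read


def file_level_unique_bytes(files):
    """Bytes stored when only whole identical files are collapsed (SIS-style)."""
    seen = {}
    for data in files.values():
        seen.setdefault(hashlib.sha256(data).digest(), len(data))
    return sum(seen.values())


def chunk_level_unique_bytes(files, chunk_size=CHUNK_SIZE):
    """Bytes stored when identical fixed-size chunks are collapsed."""
    seen = {}
    for data in files.values():
        for start in range(0, len(data), chunk_size):
            chunk = data[start:start + chunk_size]
            seen.setdefault(hashlib.sha256(chunk).digest(), len(chunk))
    return sum(seen.values())


files = {
    "a.txt": b"AAAABBBBCCCC",
    "b.txt": b"AAAABBBBCCCC",  # exact copy of a.txt
    "c.txt": b"AAAABBBBDDDD",  # shares its first two chunks with a.txt
}

print(file_level_unique_bytes(files))   # 24
print(chunk_level_unique_bytes(files))  # 16
```

File-level comparison only saves space for the exact copy, while sub-file comparison also catches the chunks that `c.txt` shares with the other files.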

How old does a file have to be before it is deduplicated?

Default optimization policy: Minimum file age = 3 days.

How do I know if deduplication is enabled?

Monitoring the event log can help you understand deduplication events and status. To view deduplication events, open Event Viewer, navigate to Applications and Services Logs, click Microsoft, click Windows, and then click Deduplication.

Why do you need data deduplication?

Data deduplication is important because it significantly reduces your storage space needs, saving you money and reducing how much bandwidth is wasted on transferring data to/from remote storage locations.

What are the disadvantages of deduplication?

One disadvantage of data deduplication is the potential loss of data integrity. Block-level deduplication solutions that use hashes create the possibility of hash collisions (identical hashes for different data blocks). Without additional built-in verification, the resulting false positives can cause loss of data integrity.
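The kind of additional verification mentioned above can be sketched as a byte-for-byte comparison whenever two blocks share a hash. The example below uses a deliberately weak hash so that a collision is easy to trigger; the class and function names are hypothetical, and this is not a description of how any particular product guards against collisions.

```python
def weak_hash(block):
    """Deliberately weak hash (sum of bytes mod 256) so collisions are easy to show."""
    return sum(block) % 256


class VerifyingStore:
    """Block store that never treats a hash match alone as proof of equality."""

    def __init__(self):
        self._buckets = {}  # hash -> list of distinct blocks sharing that hash

    def put(self, block):
        """Store a block and return a (hash, index) reference to it."""
        digest = weak_hash(block)
        bucket = self._buckets.setdefault(digest, [])
        for index, existing in enumerate(bucket):
            if existing == block:  # byte-for-byte check, not just hash equality
                return (digest, index)
        bucket.append(block)  # same hash, different bytes: keep both
        return (digest, len(bucket) - 1)

    def get(self, ref):
        """Return the block a reference points to."""
        digest, index = ref
        return self._buckets[digest][index]


store = VerifyingStore()
ref_ab = store.put(b"ab")
ref_ba = store.put(b"ba")  # collides with b"ab" under weak_hash

print(weak_hash(b"ab") == weak_hash(b"ba"))  # True
print(store.get(ref_ab), store.get(ref_ba))  # b'ab' b'ba'
```

Without the comparison inside `put`, the second block would have been silently replaced by a reference to the first, which is exactly the false positive described above.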

How do I run deduplication manually?

You can run every scheduled Data Deduplication job manually by using the following PowerShell cmdlets:

- Start-DedupJob: Starts a new Data Deduplication job.

- Stop-DedupJob: Stops a Data Deduplication job already in progress (or removes it from the queue).

How do you deduplicate data?

In the process of deduplication, extra copies of the same data are deleted, leaving only one copy to be stored. The data is analyzed to identify duplicate byte patterns and to verify that the retained instance is the only stored copy. Then, duplicates are replaced with a reference that points to the stored chunk.
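The replace-with-a-reference step can be illustrated with a toy chunk store. This is a simplified sketch under stated assumptions: fixed-size chunks, SHA-256 digests as references, and an in-memory dictionary as the store. The function names `deduplicate` and `rehydrate` are hypothetical.

```python
import hashlib


def deduplicate(data, chunk_size, store):
    """Split data into chunks, keep one copy of each in store, return references."""
    recipe = []
    for start in range(0, len(data), chunk_size):
        chunk = data[start:start + chunk_size]
        key = hashlib.sha256(chunk).hexdigest()
        store.setdefault(key, chunk)  # only the first copy is actually stored
        recipe.append(key)            # every occurrence becomes a reference
    return recipe


def rehydrate(recipe, store):
    """Rebuild the original bytes by following the references in order."""
    return b"".join(store[key] for key in recipe)


store = {}
original = b"ABCDABCDABCDWXYZ"
recipe = deduplicate(original, 4, store)

print(len(recipe), len(store))                # 4 2
print(rehydrate(recipe, store) == original)   # True
```

Four chunk references are kept, but only two distinct chunks are stored, and the original data can still be reconstructed exactly.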

How do I disable deduplication?

If you simply disable Data Deduplication, the volume remains in a deduplicated state and the existing deduplicated data stays accessible. To undo deduplication on a volume, first run the Start-DedupJob cmdlet with Unoptimization specified for the Type parameter, and then disable the feature.

How do I enable data deduplication in Windows Server 2012 R2?

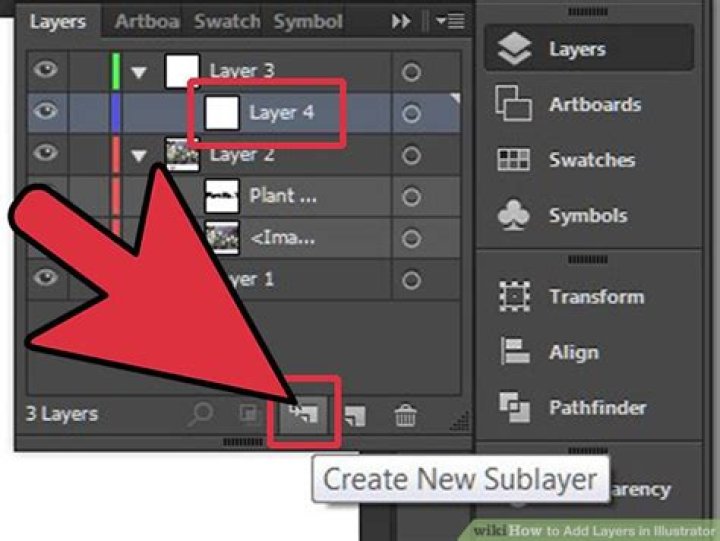

From the Server Manager dashboard, right-click a data volume and choose Configure Data Deduplication. The Deduplication Settings page appears. Select Enable data deduplication. On Windows Server 2012 R2, in the Data deduplication box, select the workload you want to host on the volume.

How do I configure data deduplication in Server Manager?

There are three Usage Types included with Data Deduplication. To choose one, select File and Storage Services in Server Manager, and then select Volumes. Right-click the desired volume and select Configure Data Deduplication. Select the desired Usage Type from the drop-down box and select OK.

What is the maximum deduplicated volume size in Windows Server 2012 R2?

In Windows Server 2012 and Windows Server 2012 R2, volumes had to be carefully sized to ensure that Data Deduplication could keep up with the churn on the volume. This typically meant that the average maximum size of a deduplicated volume for a high-churn workload was 1-2 TB, and the absolute maximum recommended size was 10 TB.

How accurate is Windows Server deduplication?

According to Microsoft, Microsoft IT deployed Windows Server with deduplication for a year and reported actual savings numbers. Microsoft states that these numbers validate its analysis of typical data as fairly accurate.